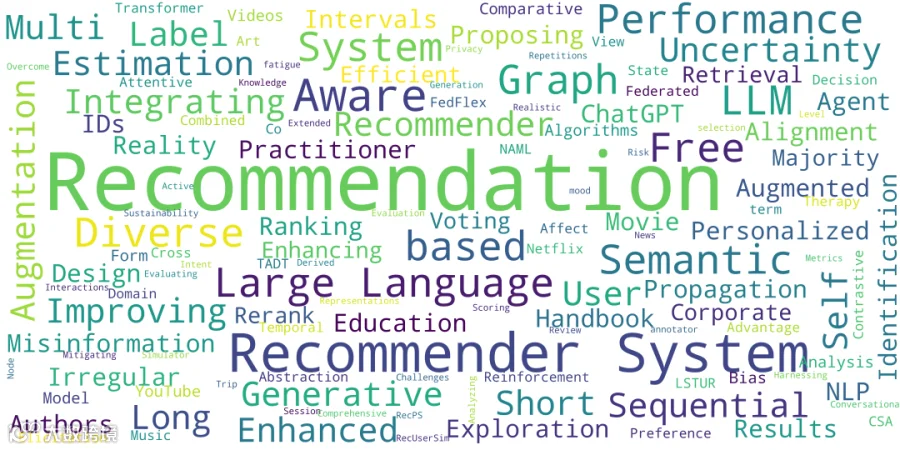

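This issue highlights 12 recommender-system papers released last week (0728-0803). The main research directions include recommender self-awareness, generative recommendation, LLM-enhanced graph recommendation, LLM-enhanced reinforcement-learning recommendation, cross-domain recommendation, news recommendation, trip recommendation, conversational recommendation, privacy risk scoring for recommender systems, and graph generation for recommendation.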

1. Are Recommenders Self-Aware? Label-Free Recommendation Performance Estimation via Model Uncertainty

2. Generative Recommendation with Semantic IDs: A Practitioner's Handbook

3. Enhancing Graph-based Recommendations with Majority-Voting LLM-Rerank Augmentation

4. Large Language Model-Enhanced Reinforcement Learning for Diverse and Novel Recommendations

5. Affect-aware Cross-Domain Recommendation for Art Therapy via Music Preference Elicitation

6. TADT-CSA: Temporal Advantage Decision Transformer with Contrastive State Abstraction for Generative Recommendation

7. Co-NAML-LSTUR: A Combined Model with Attentive Multi-View Learning and Long- and Short-term User Representations for News Recommendation

8. Analyzing and Mitigating Repetitions in Trip Recommendation

9. RecUserSim: A Realistic and Diverse User Simulator for Evaluating Conversational Recommender Systems

10. RecPS: Privacy Risk Scoring for Recommender Systems

11. A Comprehensive Review on Harnessing Large Language Models to Overcome Recommender System Challenges

12. Beyond Interactions: Node-Level Graph Generation for Knowledge-Free Augmentation in Recommender Systems

1. Are Recommenders Self-Aware? Label-Free Recommendation Performance Estimation via Model Uncertainty

Jiayu Li, Ziyi Ye, Guohao Jian, Zhiqiang Guo, Weizhi Ma, Qingyao Ai, Min Zhang

https://arxiv.org/abs/2507.23208

Can a recommendation model be self-aware? This paper investigates the recommender's self-awareness by quantifying its uncertainty, which provides a label-free estimation of its performance. Such self-assessment can enable more informed understanding and decision-making before the recommender engages with any users. To this end, we propose an intuitive and effective method, probability-based List Distribution uncertainty (LiDu). LiDu measures uncertainty by determining the probability that a recommender will generate a certain ranking list based on the prediction distributions of individual items. We validate LiDu's ability to represent model self-awareness in two settings: (1) with a matrix factorization model on a synthetic dataset, and (2) with popular recommendation algorithms on real-world datasets. Experimental results show that LiDu is more correlated with recommendation performance than a series of label-free performance estimators. Additionally, LiDu provides valuable insights into the dynamic inner states of models throughout training and inference. This work establishes an empirical connection between recommendation uncertainty and performance, framing it as a step towards more transparent and self-evaluating recommender systems.
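The abstract does not give LiDu's exact formula, so the following is only a minimal sketch of the underlying idea: estimating how likely a model is to produce a given ranking list from per-item prediction distributions. It assumes (hypothetically) that each item's predicted score is an independent Gaussian, for example from an ensemble or MC dropout, and uses Monte Carlo sampling; `ranking_list_probability` is an illustrative name, not the authors' API.

```python
import random

def ranking_list_probability(means, stds, k, n_samples=10_000, seed=0):
    """Monte Carlo estimate of the probability that sampled item scores
    reproduce the top-k list ranked by the mean scores.

    Assumption: item i's predicted score follows Normal(means[i], stds[i]).
    A low probability means the model is unsure of its own ranking.
    """
    rng = random.Random(seed)
    items = range(len(means))
    # Reference list: the top-k items under the mean (point) predictions.
    reference = sorted(items, key=lambda i: -means[i])[:k]
    hits = 0
    for _ in range(n_samples):
        # Draw one score per item from its predictive distribution.
        sampled = [rng.gauss(m, s) for m, s in zip(means, stds)]
        top_k = sorted(items, key=lambda i: -sampled[i])[:k]
        hits += top_k == reference  # order matters: full ranked lists are compared
    return hits / n_samples

confident = ranking_list_probability([3.0, 2.0, 1.0], [0.01] * 3, k=2)
uncertain = ranking_list_probability([1.0, 1.0, 1.0], [1.0] * 3, k=2)
print(confident, uncertain)
```

With well-separated, low-variance scores the probability is essentially 1.0; with three exchangeable items it is roughly 1/6, because each of the six ordered top-2 lists is equally likely. An uncertainty score could then be taken as something like one minus this probability.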

2. Generative Recommendation with Semantic IDs: A Practitioner's Handbook

Clark Mingxuan Ju, Liam Collins, Leonardo Neves, Bhuvesh Kumar, Louis Yufeng Wang, Tong Zhao, Neil Shah

https://arxiv.org/abs/2507.22224

Generative recommendation (GR) has gained increasing attention for its promising performance compared to traditional models. A key factor contributing to the success of GR is the semantic ID (SID), which converts continuous semantic representations (e.g., from large language models) into discrete ID sequences. This enables GR models with SIDs to both incorporate semantic information and learn collaborative filtering signals, while retaining the benefits of discrete decoding. However, varied modeling techniques, hyper-parameters, and experimental setups in existing literature make direct comparisons between GR proposals challenging. Furthermore, the absence of an open-source, unified framework hinders systematic benchmarking and extension, slowing model iteration. To address this challenge, our work introduces and open-sources a framework for Generative Recommendation with semantic ID, namely GRID, specifically designed for modularity to facilitate easy component swapping and accelerate idea iteration. Using GRID, we systematically experiment with and ablate different components of GR models with SIDs on public benchmarks. Our comprehensive experiments with GRID reveal that many overlooked architectural components in GR models with SIDs substantially impact performance. This both offers novel insights and validates the utility of an open-source platform for robust benchmarking and GR research advancement. GRID is open-sourced at https://github.com/snap-research/GRID
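One common way to turn a continuous embedding into a semantic ID is residual quantization: each level picks the nearest codeword and passes the leftover residual to the next level. The sketch below illustrates that step only, with small hand-written codebooks (in practice they are learned, e.g. by an RQ-VAE or residual k-means); it is not GRID's code, and `semantic_id` and `CODEBOOKS` are hypothetical names.

```python
def nearest(codebook, vec):
    """Index of the codeword closest to vec in squared Euclidean distance."""
    return min(range(len(codebook)),
               key=lambda j: sum((c - v) ** 2 for c, v in zip(codebook[j], vec)))

def semantic_id(embedding, codebooks):
    """Residual quantization: each level quantizes what earlier levels missed."""
    residual = list(embedding)
    codes = []
    for codebook in codebooks:
        j = nearest(codebook, residual)
        codes.append(j)
        # Subtract the chosen codeword; the next level encodes the remainder.
        residual = [r - c for r, c in zip(residual, codebook[j])]
    return tuple(codes)

# Hypothetical 2-level codebooks for 2-D embeddings (learned in practice).
CODEBOOKS = [
    [[0.0, 0.0], [1.0, 1.0], [-1.0, 1.0]],  # coarse level
    [[0.0, 0.0], [0.1, 0.0], [0.0, 0.1]],   # fine level
]
print(semantic_id([1.1, 1.0], CODEBOOKS))   # (1, 1)
print(semantic_id([-1.0, 1.1], CODEBOOKS))  # (2, 2)
```

Items whose embeddings are close share a prefix of their semantic ID, which is what lets a generative model decode items token by token while still exploiting semantic similarity.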

3. Enhancing Graph-based Recommendations with Majority-Voting LLM-Rerank Augmentation

Minh-Anh Nguyen, Bao Nguyen, Ha Lan N.T., Tuan Anh Hoang, Duc-Trong Le, Dung D. Le

https://arxiv.org/abs/2507.21563

Recommendation systems often suffer from data sparsity caused by limited user-item interactions, which degrades their performance and amplifies popularity bias in real-world scenarios. This paper proposes a novel data augmentation framework that leverages Large Language Models (LLMs) and item textual descriptions to enrich interaction data. By few-shot prompting LLMs multiple times to rerank items and aggregating the results via majority voting, we generate high-confidence synthetic user-item interactions, supported by theoretical guarantees based on the concentration of measure. To effectively leverage the augmented data in the context of a graph recommendation system, we integrate it into a graph contrastive learning framework to mitigate distributional shift and alleviate popularity bias. Extensive experiments show that our method improves accuracy and reduces popularity bias, outperforming strong baselines.
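The aggregation step can be pictured as follows: each LLM call returns a reranked candidate list for a user, and an item becomes a synthetic interaction only if it lands in the top-k of a strict majority of runs. This is a sketch under assumptions (unique items per run, a strict-majority threshold); `majority_vote_augment` is a hypothetical name and the paper's exact voting rule may differ.

```python
from collections import Counter

def majority_vote_augment(rerank_runs, k, min_votes=None):
    """Keep items placed in the top-k by a strict majority of reranking runs.

    rerank_runs: one reranked candidate list per LLM call (items unique per run).
    """
    if min_votes is None:
        min_votes = len(rerank_runs) // 2 + 1  # strict majority
    votes = Counter(item for run in rerank_runs for item in run[:k])
    # Sort by vote count, then by item, so the output is deterministic.
    return sorted((item for item, c in votes.items() if c >= min_votes),
                  key=lambda item: (-votes[item], item))

runs = [["a", "b", "c", "d"], ["b", "a", "d", "c"], ["a", "c", "b", "d"]]
print(majority_vote_augment(runs, k=2))  # ['a', 'b']
```

Here `a` appears in all three top-2 lists and `b` in two, while `c` appears once and is discarded; the surviving (user, item) pairs would then be added to the interaction graph.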

4. Large Language Model-Enhanced Reinforcement Learning for Diverse and Novel Recommendations

Jiin Woo, Alireza Bagheri Garakani, Tianchen Zhou, Zhishen Huang, Yan Gao

https://arxiv.org/abs/2507.21274

In recommendation systems, diversity and novelty are essential for capturing varied user preferences and encouraging exploration, yet many systems prioritize click relevance. While reinforcement learning (RL) has been explored to improve diversity, it often depends on random exploration that may not align with user interests. We propose LAAC (LLM-guided Adversarial Actor Critic), a novel method that leverages large language models (LLMs) as reference policies to suggest novel items, while training a lightweight policy to refine these suggestions using system-specific data. The method formulates training as a bilevel optimization between actor and critic networks, enabling the critic to selectively favor promising novel actions and the actor to improve its policy beyond LLM recommendations. To mitigate overestimation of unreliable LLM suggestions, we apply regularization that anchors critic values for unexplored items close to well-estimated dataset actions. Experiments on real-world datasets show that LAAC outperforms existing baselines in diversity, novelty, and accuracy, while remaining robust on imbalanced data, effectively integrating LLM knowledge without expensive fine-tuning.
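The abstract describes a regularizer that keeps critic values for unexplored (LLM-suggested) items close to those of well-estimated dataset actions. A minimal sketch of such a loss, assuming a squared TD error plus a squared-distance penalty to the mean value of logged actions, is shown below; the function name and the exact penalty form are hypothetical, and the adversarial actor-critic bilevel optimization is not shown.

```python
def anchored_critic_loss(q_logged, td_targets, q_unexplored, weight=1.0):
    """Hypothetical critic objective in the spirit of LAAC's regularizer.

    q_logged:     critic values Q(s, a) for actions that appear in the dataset
    td_targets:   their bootstrapped targets r + gamma * Q(s', a')
    q_unexplored: critic values for LLM-suggested items absent from the data
    """
    n = len(q_logged)
    td_loss = sum((q - y) ** 2 for q, y in zip(q_logged, td_targets)) / n
    # Anchor: average value of well-estimated dataset actions
    # (it would be treated as a constant, with no gradient, during training).
    anchor = sum(q_logged) / n
    anchor_penalty = sum((q - anchor) ** 2 for q in q_unexplored) / len(q_unexplored)
    return td_loss + weight * anchor_penalty

print(anchored_critic_loss([1.0, 2.0], [1.0, 2.0], [1.5]))  # 0.0
print(anchored_critic_loss([1.0, 2.0], [1.0, 2.0], [3.5]))  # 4.0
```

An LLM suggestion whose value drifts far above the logged actions is penalized, which limits overestimation until the data supports it.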

5. Affect-aware Cross-Domain Recommendation for Art Therapy via Music Preference Elicitation

Bereket A. Yilma, Luis A. Leiva

https://arxiv.org/abs/2507.21120

Art Therapy (AT) is an established practice that facilitates emotional processing and recovery through creative expression. Recently, Visual Art Recommender Systems (VA RecSys) have emerged to support AT, demonstrating their potential by personalizing therapeutic artwork recommendations. Nonetheless, current VA RecSys rely on visual stimuli for user modeling, limiting their ability to capture the full spectrum of emotional responses during preference elicitation. Previous studies have shown that music stimuli elicit unique affective reflections, presenting an opportunity for cross-domain recommendation (CDR) to enhance personalization in AT. Since CDR has not yet been explored in this context, we propose a family of CDR methods for AT based on music-driven preference elicitation. A large-scale study with 200 users demonstrates the efficacy of music-driven preference elicitation, outperforming the classic visual-only elicitation approach. Our source code, data, and models are available at https://github.com/ArtAICare/Affect-aware-CDR

6. TADT-CSA: Temporal Advantage Decision Transformer with Contrastive State Abstraction for Generative Recommendation

Xiang Gao, Tianyuan Liu, Yisha Li, Jingxin Liu, Lexi Gao, Xin Li, Haiyang Lu, Liyin Hong

https://arxiv.org/abs/2507.20327

With the rapid advancement of Transformer-based Large Language Models (LLMs), generative recommendation has shown great potential in enhancing both the accuracy and semantic understanding of modern recommender systems. Compared to LLMs, the Decision Transformer (DT) is a lightweight generative model applied to sequential recommendation tasks. However, DT faces challenges in trajectory stitching, often producing suboptimal trajectories. Moreover, due to the high dimensionality of user states and the vast state space inherent in recommendation scenarios, DT can incur significant computational costs and struggle to learn effective state representations. To overcome these issues, we propose a novel Temporal Advantage Decision Transformer with Contrastive State Abstraction (TADT-CSA) model. Specifically, we combine the conventional Return-To-Go (RTG) signal with a novel temporal advantage (TA) signal that encourages the model to capture both long-term returns and their sequential trend. Furthermore, we integrate a contrastive state abstraction module into the DT framework to learn more effective and expressive state representations. Within this module, we introduce a TA-conditioned State Vector Quantization (TAC-SVQ) strategy, where the TA score guides the state codebooks to incorporate contextual token information. Additionally, a reward prediction network and a contrastive transition prediction (CTP) network are employed to ensure the state codebook preserves both the reward information of the current state and the transition information between adjacent states. Empirical results on both public datasets and an online recommendation system demonstrate the effectiveness of the TADT-CSA model and its superiority over baseline methods.
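A Decision Transformer conditions each step on the return-to-go (RTG), the sum of rewards from that step to the end of the trajectory. The sketch below computes RTG right to left; the temporal advantage (TA) signal is not defined in the abstract, so it is deliberately not implemented here, and the optional discount `gamma` is an assumption (the original Decision Transformer uses undiscounted returns).

```python
def returns_to_go(rewards, gamma=1.0):
    """RTG_t = sum over t' >= t of gamma**(t' - t) * r_t', computed right to left."""
    rtg = [0.0] * len(rewards)
    running = 0.0
    for t in range(len(rewards) - 1, -1, -1):
        running = rewards[t] + gamma * running
        rtg[t] = running
    return rtg

print(returns_to_go([1, 0, 2]))             # [3.0, 2.0, 2.0]
print(returns_to_go([1, 0, 2], gamma=0.5))  # [1.5, 1.0, 2.0]
```

At inference time the model is prompted with a desired RTG and the RTG is decremented by each observed reward; TADT-CSA adds its TA signal alongside this conditioning.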

7. Co-NAML-LSTUR: A Combined Model with Attentive Multi-View Learning and Long- and Short-term User Representations for News Recommendation

Minh Hoang Nguyen, Thuat Thien Nguyen, Minh Nhat Ta

https://arxiv.org/abs/2507.20210

News recommendation systems play a vital role in mitigating information overload by delivering personalized news content. A central challenge is to effectively model both multi-view news representations and the dynamic nature of user interests, which often span both short- and long-term preferences. Existing methods typically rely on single-view features of news articles (e.g., titles or categories) or fail to comprehensively capture user preferences across time scales. In this work, we propose Co-NAML-LSTUR, a hybrid news recommendation framework that integrates NAML for attentive multi-view news modeling and LSTUR for capturing both long- and short-term user representations. Our model also incorporates BERT-based word embeddings to enhance semantic feature extraction. We evaluate Co-NAML-LSTUR on two widely used benchmarks, MIND-small and MIND-large. Experimental results show that Co-NAML-LSTUR achieves substantial improvements over most state-of-the-art baselines on both MIND-small and MIND-large. These results demonstrate the effectiveness of combining multi-view news representations with dual-scale user modeling. The implementation of our model is publicly available at https://github.com/MinhNguyenDS/Co-NAML-LSTUR
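In NAML-style models, each news view (title, body, category, ...) is encoded separately and the views are combined by attention; a candidate is then scored by its dot product with the user vector. The sketch below uses a simplified dot-product attention with a hypothetical query vector instead of NAML's learned additive attention, and it omits LSTUR's long/short-term user encoder.

```python
import math

def softmax(xs):
    m = max(xs)  # subtract the max for numerical stability
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attention_pool(view_vectors, query):
    """Weight each view vector by softmax(query . view) and sum them."""
    scores = [sum(q * v for q, v in zip(query, vec)) for vec in view_vectors]
    weights = softmax(scores)
    dim = len(view_vectors[0])
    return [sum(w * vec[d] for w, vec in zip(weights, view_vectors)) for d in range(dim)]

def click_score(user_vector, news_vector):
    """Candidate news is scored by the dot product with the user representation."""
    return sum(u * n for u, n in zip(user_vector, news_vector))

# Hypothetical 2-D embeddings for one article: a title view and a category view.
news = attention_pool([[1.0, 0.0], [0.0, 1.0]], query=[0.0, 0.0])
print(news)                           # [0.5, 0.5]
print(click_score([2.0, 2.0], news))  # 2.0
```

A zero query gives uniform view weights; a learned query lets the model emphasize whichever view is most informative for each article.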

8. Analyzing and Mitigating Repetitions in Trip Recommendation

Wenzheng Shu, Kangqi Xu, Wenxin Tai, Ting Zhong, Yong Wang, Fan Zhou

https://arxiv.org/abs/2507.19798

Trip recommendation has emerged as a highly sought-after service over the past decade. Although current studies have made significant progress in capturing the consistency of human intentions, they still produce undesired repetitive outcomes that need to be resolved. We make two pivotal discoveries using statistical analyses and experimental designs: (1) the occurrence of repetitions is intricately linked to the models and decoding strategies; (2) adding perturbations to logits during training and decoding can reduce repetition. Motivated by these observations, we introduce AR-Trip (Anti Repetition for Trip Recommendation), which incorporates a cycle-aware predictor comprising three mechanisms to avoid duplicate Points-of-Interest (POIs), and we demonstrate their effectiveness in alleviating repetition. Experiments on four public datasets show that AR-Trip successfully mitigates repetition issues while enhancing precision.
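Two generic anti-repetition devices are easy to illustrate: masking POIs that are already in the trip, and perturbing logits with noise before picking the next POI. The sketch below combines both with Gumbel noise; it is not AR-Trip's cycle-aware predictor, and `logits_fn` is a hypothetical model interface returning a `{poi: logit}` dict.

```python
import math
import random

def decode_trip(logits_fn, start, length, noise_scale=0.0, seed=0):
    """Greedy next-POI decoding with two anti-repetition devices:
    1. POIs already in the trip are masked out, so no POI repeats;
    2. optional Gumbel noise (scaled by noise_scale) perturbs the logits.
    """
    rng = random.Random(seed)
    trip = [start]
    while len(trip) < length:
        scores = {}
        for poi, logit in logits_fn(trip).items():
            if poi in trip:
                continue  # mask visited POIs
            u = rng.random() or 1e-12  # avoid log(0)
            scores[poi] = logit + noise_scale * -math.log(-math.log(u))
        if not scores:
            break  # every candidate POI has already been visited
        trip.append(max(scores, key=scores.get))
    return trip

def popular_first(trip):
    # Toy model that always prefers the popular POI "A".
    return {"A": 5.0, "B": 3.0, "C": 1.0, "D": 0.0}

print(decode_trip(popular_first, "A", 4))  # ['A', 'B', 'C', 'D']
```

Without the mask, a greedy decoder on this toy model would output `A` at every step, which is exactly the repetition pattern the paper analyzes.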

9. RecUserSim: A Realistic and Diverse User Simulator for Evaluating Conversational Recommender Systems

Luyu Chen, Quanyu Dai, Zeyu Zhang, Xueyang Feng, Mingyu Zhang, Pengcheng Tang, Xu Chen, Yue Zhu, Zhenhua Dong

https://arxiv.org/abs/2507.22897

Conversational recommender systems (CRS) enhance user experience through multi-turn interactions, yet evaluating CRS remains challenging. User simulators can provide comprehensive evaluations through interactions with CRS, but building realistic and diverse simulators is difficult. While recent studies leverage large language models (LLMs) to simulate user interactions, they still fall short in emulating individual real users across diverse scenarios and lack explicit rating mechanisms for quantitative evaluation. To address these gaps, we propose RecUserSim, an LLM agent-based user simulator with enhanced simulation realism and diversity while providing explicit scores. RecUserSim features several key modules: a profile module for defining realistic and diverse user personas, a memory module for tracking interaction history and discovering unknown preferences, and a core action module inspired by Bounded Rationality theory that enables nuanced decision-making while generating more fine-grained actions and personalized responses. To further enhance output control, a refinement module is designed to fine-tune final responses. Experiments demonstrate that RecUserSim generates diverse, controllable outputs and produces realistic, high-quality dialogues, even with smaller base LLMs. The ratings generated by RecUserSim show high consistency across different base LLMs, highlighting its effectiveness for CRS evaluation.
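Bounded Rationality is often operationalized as satisficing: a user accepts the first option that is "good enough" rather than searching exhaustively for the best one, and gives up when patience runs out. The rule-based toy below only illustrates that idea; RecUserSim's action module is LLM-driven, and `satisficing_choice`, `aspiration`, and `patience` are hypothetical.

```python
def satisficing_choice(recommendations, utility, aspiration, patience):
    """Toy boundedly rational user: inspect recommendations in order and accept
    the first one whose utility reaches the aspiration level.

    utility:    hypothetical function mapping an item to the user's utility
    aspiration: minimum acceptable utility
    patience:   how many recommendations the user inspects before giving up
    Returns (action, item, items_inspected).
    """
    inspected = 0
    for item in recommendations[:patience]:
        inspected += 1
        if utility(item) >= aspiration:
            return "accept", item, inspected
    return "reject", None, inspected

prefs = {"x": 0.2, "y": 0.7, "z": 0.9}
print(satisficing_choice(["x", "y", "z"], prefs.get, aspiration=0.6, patience=3))
# ('accept', 'y', 2): 'y' is good enough even though 'z' scores higher
print(satisficing_choice(["x", "y", "z"], prefs.get, aspiration=0.6, patience=1))
# ('reject', None, 1)
```

Varying the aspiration level and patience across simulated personas is one simple way to obtain diverse, yet controllable, user behavior.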

10. RecPS: Privacy Risk Scoring for Recommender Systems

Jiajie He, Yuechun Gu, Keke Chen

https://arxiv.org/abs/2507.18365

Recommender systems (RecSys) have become an essential component of many web applications. The core of the system is a recommendation model trained on highly sensitive user-item interaction data. While privacy-enhancing techniques are actively studied in the research community, real-world model development still relies on minimal privacy protection, e.g., via controlled access. Users of such systems should have the right to choose not to share highly sensitive interactions. However, there is no method that lets users know which interactions are more sensitive than others. Thus, quantifying the privacy risk of RecSys training data is a critical step to enabling privacy-aware RecSys model development and deployment. We propose a membership-inference attack (MIA)-based privacy scoring method, RecPS, to measure privacy risks at both the interaction and user levels. The RecPS interaction-level score definition is motivated by and derived from differential privacy, and is then extended to a user-level scoring method. A critical component is the interaction-level MIA method RecLiRA, which gives high-quality membership estimation. We have conducted extensive experiments on well-known benchmark datasets and RecSys models to show the unique features and benefits of RecPS scoring in risk assessment and RecSys model unlearning.
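RecLiRA's name suggests it builds on the Likelihood Ratio Attack (LiRA), which compares a target model's confidence on an example against Gaussians fitted to shadow models trained with and without that example. The sketch below shows that generic LiRA test for a single interaction; the shadow-model confidences are hypothetical inputs, and RecLiRA's recommender-specific details are not reproduced.

```python
import math
import statistics

def logit(p):
    """Logit scaling, used by LiRA to make confidences closer to Gaussian."""
    return math.log(p / (1.0 - p))

def gaussian_logpdf(x, mu, sigma):
    return -0.5 * math.log(2 * math.pi * sigma ** 2) - (x - mu) ** 2 / (2 * sigma ** 2)

def lira_score(target_conf, in_confs, out_confs, eps=1e-6):
    """Log-likelihood ratio that one interaction was in the training set.

    in_confs / out_confs: confidences on this interaction from shadow
    recommenders trained with / without it (hypothetical inputs here).
    In this sketch, a positive score favors "member" (higher privacy risk).
    """
    x = logit(target_conf)
    ins = [logit(c) for c in in_confs]
    outs = [logit(c) for c in out_confs]
    mu_in, sd_in = statistics.mean(ins), statistics.pstdev(ins) + eps
    mu_out, sd_out = statistics.mean(outs), statistics.pstdev(outs) + eps
    return gaussian_logpdf(x, mu_in, sd_in) - gaussian_logpdf(x, mu_out, sd_out)

IN_CONFS, OUT_CONFS = [0.9, 0.85, 0.95], [0.3, 0.4, 0.35]
print(lira_score(0.9, IN_CONFS, OUT_CONFS) > 0)   # True: looks like a member
print(lira_score(0.35, IN_CONFS, OUT_CONFS) > 0)  # False: looks like a non-member
```

Aggregating such per-interaction scores over a user's history is one way a user-level risk score could be formed.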

11. A Comprehensive Review on Harnessing Large Language Models to Overcome Recommender System Challenges

Rahul Raja, Anshaj Vats, Arpita Vats, Anirban Majumder

https://arxiv.org/abs/2507.21117

Recommender systems have traditionally followed modular architectures comprising candidate generation, multi-stage ranking, and re-ranking, each trained separately with supervised objectives and hand-engineered features. While effective in many domains, such systems face persistent challenges including sparse and noisy interaction data, cold-start problems, limited personalization depth, and inadequate semantic understanding of user and item content. The recent emergence of Large Language Models (LLMs) offers a new paradigm for addressing these limitations through unified, language-native mechanisms that can generalize across tasks, domains, and modalities. In this paper, we present a comprehensive technical survey of how LLMs can be leveraged to tackle key challenges in modern recommender systems. We examine the use of LLMs for prompt-driven candidate retrieval, language-native ranking, retrieval-augmented generation (RAG), and conversational recommendation, illustrating how these approaches enhance personalization, semantic alignment, and interpretability without requiring extensive task-specific supervision. LLMs further enable zero- and few-shot reasoning, allowing systems to operate effectively in cold-start and long-tail scenarios by leveraging external knowledge and contextual cues. We categorize these emerging LLM-driven architectures and analyze their effectiveness in mitigating core bottlenecks of conventional pipelines. In doing so, we provide a structured framework for understanding the design space of LLM-enhanced recommenders, and outline the trade-offs between accuracy, scalability, and real-time performance. Our goal is to demonstrate that LLMs are not merely auxiliary components but foundational enablers for building more adaptive, semantically rich, and user-centric recommender systems.

12. Beyond Interactions: Node-Level Graph Generation for Knowledge-Free Augmentation in Recommender Systems

Zhaoyan Wang, Hyunjun Ahn, In-Young Ko

https://arxiv.org/abs/2507.20578

Recent advances in recommender systems rely on external resources such as knowledge graphs or large language models to enhance recommendations, which limits their applicability in real-world settings due to data dependency and computational overhead. Although knowledge-free models can also bolster recommendations through direct edge operations, the lack of richer augmentation primitives leaves them unable to bridge semantic and structural gaps, so they fall short as high-quality substitutes for knowledge-based paradigms. Unlike existing diffusion-based works that remodel user-item interactions, this work proposes NodeDiffRec, a pioneering knowledge-free augmentation framework that enables fine-grained node-level graph generation for recommendations and expands the scope of restricted augmentation primitives via diffusion. By synthesizing pseudo-items and corresponding interactions that align with the underlying distribution for injection, and further refining user preferences through a denoising preference modeling process, NodeDiffRec dramatically enhances both semantic diversity and structural connectivity without external knowledge. Extensive experiments across diverse datasets and recommendation algorithms demonstrate the superiority of NodeDiffRec, achieving state-of-the-art (SOTA) performance with maximum average improvements of 98.6% in Recall@5 and 84.0% in NDCG@5 over selected baselines.
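For reference, the reported Recall@5 and NDCG@5 are usually computed per user as below, assuming binary relevance (an item is either in the user's held-out set or not); evaluation code in individual papers may differ in details such as tie handling or graded relevance.

```python
import math

def recall_at_k(ranked, relevant, k):
    """Fraction of the user's relevant items that appear in the top-k."""
    hits = sum(1 for item in ranked[:k] if item in relevant)
    return hits / len(relevant)

def ndcg_at_k(ranked, relevant, k):
    """DCG of the top-k divided by the best achievable DCG (binary relevance)."""
    dcg = sum(1.0 / math.log2(rank + 2)
              for rank, item in enumerate(ranked[:k]) if item in relevant)
    ideal = sum(1.0 / math.log2(rank + 2) for rank in range(min(len(relevant), k)))
    return dcg / ideal

ranked = ["a", "b", "c", "d", "e", "f"]
relevant = {"b", "f"}
print(recall_at_k(ranked, relevant, 5))  # 0.5: only "b" is in the top-5
print(ndcg_at_k(ranked, relevant, 5))    # about 0.387
```

Both metrics are averaged over users; a relative improvement such as 98.6% means the new average is (1 + 0.986) times the baseline's.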

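We welcome authors to submit write-ups of their recent outstanding recommender-system papers accepted at top conferences and journals; we will be glad to promote them so that we can all learn and progress together. If you are interested, please contact us via private message on our official account.

Submissions of in-depth technical content, paper promotion, and collaboration inquiries are welcome.

Because the official account is trialing non-chronological delivery, you may no longer receive posts from 机器学习与推荐算法 (Machine Learning and Recommendation Algorithms) on time. To get our content as soon as it is published, please star this account and tap "在看" ("Looking") at the bottom right of the article.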